Discussion

Simon Willison’s Weblog

comandillos: I've been using Qwen3.5-35B-A3B for a bit via OpenCode and oMLX on an M5 Max with 128GB of RAM and I have to say it's impressively good for a model of that size. I've seen a huge jump in the quality of the tool calls and how well it handles the agentic workflow.

mentalgear: I understand the 'fun factor' but at this point I really wonder what this pelican still proves? I mean, providers certainly could have adapted for it if they wanted, and if you want to test how well a model adapts to potential out-of-distribution contexts, it might be more worthwhile to mix different animals with different activity types (a whale on a skateboard) than always the same.

simonw: That's why I did the flamingo on a unicycle. For a delightful moment this morning I thought I might have finally caught a model provider cheating by training for the pelican, but the flamingo convinced me that wasn't the case.

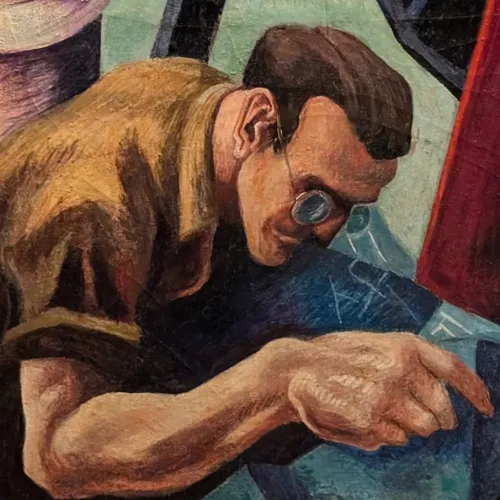

prodigycorp: To me the opus flamingo is waaaay better than the qwen one. qwen has the better pelican, though.

19qUq: How about switching to MechaStalin on a tricycle? It gets kind of boring.

mvanbaak: boring ... the ways all the models fail at a simple task never get boring to me

aliljet: I'm really curious about what competes with Claude Code to drive a local LLM like Qwen 3.6?

smashed: OpenCode?

akavel: r/LocalLlama is now doing a horse in a racing car: https://redd.it/1slz38i

jbellis: For coding, qwen 3.6 35b a3b solved 11/98 of the Power Ranking tasks (best-of-two), compared to 10/98 for the same size qwen 3.5. So it's at best very slightly improved and not at all in the class of qwen 3.5 27b dense (26 solved) let alone opus (95/98 solved, for 4.6).
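For readers who want the counts above as rates, here is a minimal sketch. It assumes all four counts are out of the same 98 tasks (the comment only states the denominator explicitly for three of them), and the dictionary keys are just labels chosen for this illustration.

```python
# Solve counts quoted in the comment above (best-of-two).
# Assumption: every model was scored on the same 98 tasks.
TOTAL_TASKS = 98

solved = {
    "qwen 3.6 35b a3b": 11,
    "qwen 3.5 35b a3b": 10,
    "qwen 3.5 27b dense": 26,
    "opus 4.6": 95,
}


def solve_rates(counts, total):
    """Return the fraction of tasks solved for each model."""
    return {name: n / total for name, n in counts.items()}


rates = solve_rates(solved, TOTAL_TASKS)
for name, rate in rates.items():
    print(f"{name}: {rate:.1%}")
```

On these numbers the gap between the two 35b a3b releases is a single task, which is consistent with the "very slightly improved" reading.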

__natty__: You compare a tiny model for local inference vs a proprietary, expensive frontier model. It would be more fair to compare against a similarly priced model or tiny frontier models like haiku, flash or gpt nano.

ericd: Eh it’s important perspective, lest someone start thinking they can drop $5k on a laptop and be free of Anthropic/OpenAI. Expensive lesson.

javawizard: Not when the article they're commenting on was doing literally exactly the same thing.

sailingcode: I'm an iguana and need to wash my bicycle in the carwash. Shall I walk or take the bus?

throwuxiytayq: I literally cannot believe that people are wasting their time doing this either as a benchmark or for fun. After every single language model release, no less.

sharkjacobs: It feels like the results stopped being interesting a little while ago but the practice has become part of simonw's brand, and it gives him something to post even when there is nothing interesting to say about another incremental improvement to a model, and so I don't imagine he'll stop.

furyofantares: It is completely wild to me that you prefer Qwen's flamingo. I think it's really bad and Opus' is pretty good.

simonw: The Opus one doesn't even have a bowtie.

furyofantares: The Opus one looks like a flamingo, and looks like it's riding the unicycle. Sitting on the seat. Feet on the pedals. The Qwen one looks like a 3-tailed, broken-winged, beakless (I guess? Is that offset white thing a beak? Or is it chewing on a pelican feather like it's a piece of straw?) monstrosity not sitting on the seat, with its one foot off the pedal (the other chopped off at the knee) of a malmanufactured wheel that has bonus spokes that are longer than the wheel. But yeah, it does have a bowtie and sunglasses that you didn't ask for! Plus it says "<3 Flamingo on a Unicycle <3", which perhaps resolves all ambiguity.

stephbook: They're certainly aware of the test, but a turtle doing a kickflip on a skateboard? I seriously doubt they train their models for that. https://x.com/JeffDean/status/2024525132266688757 If anything, the disastrous Opus 4.7 pelican shows us they don't pelicanmaxx

refulgentis: I liked both of Opus' better. It was very illuminating: in both cases I didn't see the errors Simon saw and wondered why Simon skipped over the errors I saw. Pelican: saturated!

monksy: Game over opus

bitwize: I think I found the leaked Claude Mythos version of the turtle benchmark: https://www.youtube.com/watch?v=l82XWTKLZuk

BoorishBears: This is a gag that's long outlived its humor, but we're in a space so driven by hype there are people who will unironically take some signal from it. They'll swear up and down they know it's for fun, but let a great pelican come out and see if they don't wave it as proof the model is great alongside their carwash test.

ericpauley: Going to have to disagree on the backup test. Opus flamingo is actually on the pedals and seat with functional spokes and beak. In terms of adherence to physical reality Qwen is completely off. To me it's a little puzzling that someone would prefer the Qwen output. I'd say the example actually does (vaguely) suggest that Qwen might be overfitting to the Pelican.

irthomasthomas: It's a 3B model. It should not be this close. Debating the artistic qualities in detail is missing the point.

nba456_: Good reminder that these tests have always been useless, even before they started training on it.

yieldcrv: All those models that were just at version 1.x in 2024. That’s so wild

cedws: It’s not a waste of time. As the boundaries of AI are pushed we increasingly struggle to define what intelligence actually is. It becomes more useful to test what models cannot do instead of what they can. Random tasks like the pelican test can show how general the intelligence really is, putting aside the obvious flaw that the labs can optimise for such a simple public benchmark.

chabes: OpenCode or Pi are popular agent harnesses. Lots of IDEs integrate LLMs now. I believe there’s also a Qwen Code that exists, but I have yet to try it.

wongarsu: Qwen's flamingo is artistically far more interesting. It's a one-eyed flamingo with sunglasses and a bow tie who smokes pot. Meanwhile Opus just made a boring, somewhat dorky flamingo. Even the ground and sky are more interesting in Qwen's version. But in terms of making something physically plausible, Opus certainly got a lot closer

kmacdough: Given adherence is a more significant practical barrier, it's probably the better signal. That is, if we decide to look for signal here.

doobiedowner: Is "signal" the new word you weirdos grossly abuse?... The new "optics"?

kburman: looks like Opus has been nerfed from day 1

Marciplan: I also can't understand how this goes so viral every time on Hackernews lol

BobbyJo: The fundamental challenge of AI is preventing unprompted creativity. I can spin up a random initialization and call all of its output avant-garde if we want to get creative.

Havoc: Between the legs and the beak I'd still rate the opus pelican higher

ineedasername: Thinking about why this may be at least slightly more than training on the task: language is rich with spatial metaphors, even in basic language that laymen don't consider metaphor. Within linguistics proper, concepts such as those in Lakoff's analysis in "Metaphors We Live By" and others are simply part of the field (though, unsurprisingly, I've occasionally seen it brought up among the HN crowd). The amount of money you have in the bank may often "increase" or "decrease", but it also goes up and down, which is spatial. Concepts can be adjacent to each other, or orthogonal. Plenty more. So, as models utilize weights more densely with more complex strategies learned during training, the patterns and structure of these metaphors might also be deepened. Hmmm... another thing to add to the heap of future projects: trace down the geometry of activations in older/newer models of similar size with the same prompts containing such metaphors, or these pelican prompts, and test the idea so it isn't just armchair speculation.

Quarrelsome: Maybe the next time we suspect they're optimising for the test, switch the next test to drawing "the cure for cancer".

monocasa: 35B, but your point stands I think.

userbinator: I recently fell down the rabbithole of AI-generated videos, and realised that many of the "flaws" that make them distinctive, such as objects morphing and doing unusual things, would've been nearly impossible or require very advanced CGI to create.

kube-system: Qwen, at least, can draw a complete bicycle frame. The opus frame will snap in half and can’t steer.

quux: This is a useless benchmark nowadays; every model provider trains their models on making good pelicans. Some have even trained every combination of animal/mode of transportation

ralph84: You can just straight up ask Opus if it's good at generating images and it will say no. It has never been marketed as being for image generation.

solarkraft: If I (commercially) made models I’d put specific care into producing SVGs of various animals doing (riding) various things ... I find it interesting how confident you seem to be that they’re not.

atonse: Wonder what would happen if we unleashed Karpathy’s autoresearch on the pelican bicycle test. And had it read back the image to judge it. Oh maybe it might continue to iterate on the existing drawing?

bschwindHN: I do wonder how much energy collectively has been burned on this useless "benchmark".

simonw: Claude is actually very good at SVGs, and it's genuinely useful. I have Claude knock out little SVG icons all the time. Illustrations with SVGs of pelicans riding bicycles will never be useful, because pelicans can't ride bicycles.

henry2023: More and more I suspect OpenAI is generating comments on HN to try to shift the discussion. I’m not sure you’re a bot but this is the stereotypical comment being overly critical of anything where OpenAI is not superior or being overly supportive (see comments on the Codex post today) while clearly not understanding the discussed topic at all.

itake: "artistically interesting" is IMHO both a subjective and 'solved' problem. These models are trained with an "artistically interesting" reward model that tries to guide the model towards higher quality photos. I think getting the models to generate realistic and proportional objects is a much harder and more important challenge (remember when the models would generate 6 fingers?).

simonw: Google Gemini featured a bunch of examples of exactly that in their release video for 3.1 Pro: https://x.com/JeffDean/status/2024525132266688757

tpm: The Opus bike isn't very physically plausible though.

gistscience: Yeah I can imagine these popular benchmarks get special treatment in the training of new models. I wonder how they would perform for "Elephant riding a car" or "Lion sleeping in a bed"

hopinhopout: LLMs are really causing serious brainrot if HTML pelican drawings are a usage basis for your programming projects. Even all these shitty benchmarks don't say or mean anything if companies secretly tweak them on the go

wongarsu: Most of the 'coding benchmarks' are deeply flawed too. This one at least makes it explicit. And so far, the ability to make SVGs of $animal on $vehicle seems to correlate surprisingly well with model 'intelligence'

throwuxiytayq: The whole point of this benchmark is that it asks the model to work in a modality it is not trained in and does not understand well. The result is largely meaningless. This is just like the people who are endlessly surprised by the fact that a raw LLM does not work with numbers well, or miscounts letters. In short, this test benchmarks the intelligence of the person running it, not of the model.

spwa4: It is much faster though. On my m1 max, describing a picture (quick way to get a pretty large context): Qwen 3.6 35b a3b: 34 tok/sec; Qwen 3.5 27b: 10 tok/sec; Qwen 3.5 35b a3b: doesn't support image input
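
To put the two throughput figures above in perspective, here is a rough sketch of the arithmetic. The rates are the ones quoted in the comment; the 1,000-token response length is a hypothetical value picked only for illustration, and the helper names are not from any library.

```python
# Throughput figures quoted in the comment above (tokens per second, M1 Max).
tok_per_sec = {
    "Qwen 3.6 35b a3b": 34.0,
    "Qwen 3.5 27b": 10.0,
}


def speedup(fast_rate, slow_rate):
    """How many times faster the first rate is than the second."""
    return fast_rate / slow_rate


def seconds_for(tokens, rate):
    """Time to emit `tokens` tokens at a steady `rate` in tokens per second."""
    return tokens / rate


# Hypothetical response length, assumed only for this example.
RESPONSE_TOKENS = 1000

ratio = speedup(tok_per_sec["Qwen 3.6 35b a3b"], tok_per_sec["Qwen 3.5 27b"])
print(f"speedup: {ratio:.1f}x")
for name, rate in tok_per_sec.items():
    print(f"{name}: {seconds_for(RESPONSE_TOKENS, rate):.0f} s per {RESPONSE_TOKENS} tokens")
```

This treats generation speed as constant, which real runs will not be, so it is only a back-of-the-envelope comparison.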